In July of 2021, floodwaters from the Meuse and Rhine rivers and their many tributaries ripped through western Germany, destroying homes and businesses and taking the lives of over 150 people.

Despite accurate forecasts by German scientists, many residents lost their lives that day because early warning systems failed to notify them: The technology powering these systems had failed, and people who should have heard sirens or received attention-grabbing phone alerts simply went about their daily tasks, unaware of the danger bearing down on them.

For over a decade, I helped develop and implement flood-warning systems (FWSs) in the United States. During these years, I learned to appreciate the importance of real-time data, data fidelity, and system resilience.

FWSs are a complex web of components, including weather sensors, communications networks, data processing software, and notification systems. Linking this web together are Application Programming Interfaces (APIs) that allow these components to communicate with each other. Often, the applications and services that notify citizens of impending disasters are built on top of public APIs that provide weather data or notification services.

It goes without saying that these public APIs must be rock solid. It’s truly life or death.

In this blog post, I’ll explain the 8 components that must be built and maintained to support modern and resilient data APIs such as those that power public FWSs. I’ll also explain the ways Tinybird can bolster your API's resilience and simplify your API development by managing many of these components for you.

Of course, this is not limited to public warning systems like FWSs. Any public or user-facing API, whether it's powering a critical feature at your SaaS or notifying a population about impending disaster, should be designed for high fidelity and resiliency.

In a follow-up post, I’ll walk through an example API design for publishing weather data in near real-time, and then show you how to implement it with Tinybird.

The 8 considerations for building a public data API

During my time as an FWS developer, I worked on teams that designed, implemented, and supported a public API for serving weather data. Back then, we had to essentially build the API from scratch. We hosted our own databases and leased a server rack containing our own hardware.

From that experience, I learned that deploying and supporting real-time, public APIs comes with a long list of concerns that demand attention and expertise. When you create an API from scratch, you’ll need to master each of its various components to make it all work and keep it stable.

Specifically, you must carefully consider 8 key factors when designing and deploying an API that handles high volumes of data and requests. Those 8 things are:

- Designing the API

- Deciding on a programming language and web framework

- Managing your data storage and optimizing performance

- Managing horizontal scaling and load balancing

- Selecting a hosting platform (or hosting yourself)

- Implementing rate limiting

- Monitoring performance and optimizing

- Securing the API

Here I'll dig a little deeper into each one.

Designing the API

The design process starts with determining which endpoints make up your API and which query parameters each of those endpoints requires. What data do you need to serve? What are the use cases for your API? How should people and applications expect to interact with it? What is an acceptable level of downtime? When I was designing APIs for flood warning systems, we used Java-based tools, and the iteration process was very time-consuming, with end-to-end testing requiring multistep deployments. Designing effectively up front reduces the mid-implementation design changes that slow your development speed.
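One way to make these design questions concrete before writing any server code is to write each endpoint down as data: its path and the query parameters it accepts. The following is a minimal sketch of that idea, not a real FWS API. The `/v1/readings` path and the `station_id`, `sensor_type`, and `limit` parameters are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Param:
    """One declared query parameter (all names here are hypothetical)."""
    name: str
    type: type
    required: bool = False
    default: object = None


@dataclass(frozen=True)
class Endpoint:
    path: str
    params: tuple

    def parse(self, query: dict) -> dict:
        """Validate raw query-string values against the declared parameters."""
        unknown = set(query) - {p.name for p in self.params}
        if unknown:
            raise ValueError(f"unknown parameters: {sorted(unknown)}")
        parsed = {}
        for p in self.params:
            if p.name in query:
                # Conversion raises ValueError on malformed input, e.g. int("abc").
                parsed[p.name] = p.type(query[p.name])
            elif p.required:
                raise ValueError(f"missing required parameter: {p.name}")
            else:
                parsed[p.name] = p.default
        return parsed


# Hypothetical endpoint definition used only for this example.
readings = Endpoint(
    path="/v1/readings",
    params=(
        Param("station_id", str, required=True),
        Param("sensor_type", str, default="rain_gauge"),
        Param("limit", int, default=100),
    ),
)

print(readings.parse({"station_id": "A1", "limit": "5"}))
```

Writing the contract down this way makes it cheap to review and change before any deployment work begins, which is where iteration was slowest in my experience.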

Deciding on a programming language and web framework

You’ll need to select a programming language and framework that is well-suited for building high-performance APIs. Popular choices include Node.js, Ruby on Rails, Python with Django or Flask, and Java with Spring. You’ll need to weigh the tradeoffs of each language and its frameworks, including how familiar your team is with each one.

Managing your data storage and optimizing performance

Data APIs expose data stored in an underlying database. The scalability of your API will depend heavily on the database you choose and the way you query it. You’ll need to understand things like indexing, transactions, sharding, replication, caching, and query optimization. You’ll have to factor in concurrency constraints and latency requirements. And that’s without even considering how you’ll host and maintain the database. When I developed FWSs, the architecture was based on single-tenant MySQL databases, and even that simple set-up demanded DBA skills for managing replicas, partitioning, SQL efficiencies, and hardware and software upgrades. I would have much preferred if these things had been managed for us.
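To illustrate why indexing is on that list, here is a small sketch that asks a database how it plans to run the same query before and after an index exists. It uses SQLite only because it ships with Python; it is a stand-in, not the MySQL setup described above, and the `readings` table and its columns are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE readings (sensor_id TEXT, ts INTEGER, value REAL)")
conn.executemany(
    "INSERT INTO readings VALUES (?, ?, ?)",
    [(f"sensor-{i % 50}", i, i * 0.1) for i in range(5000)],
)


def query_plan(conn, sql, args=()):
    """Return SQLite's plan description for a query (the last column of each row)."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, args).fetchall()
    return " | ".join(row[3] for row in rows)


sql = "SELECT * FROM readings WHERE sensor_id = ?"

# Without an index, the only option is to scan every row.
plan_before = query_plan(conn, sql, ("sensor-7",))

# With an index on the filtered column, the database can seek directly.
conn.execute("CREATE INDEX idx_readings_sensor ON readings (sensor_id)")
plan_after = query_plan(conn, sql, ("sensor-7",))

print("before:", plan_before)
print("after: ", plan_after)
```

The exact plan wording varies by SQLite version, but the second plan should name the index while the first reports a scan of the table.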

Managing horizontal scaling and load balancing

As your usage scales, your API will need to scale with it. You’ll need to build mechanisms to scale as demand increases. That means understanding and implementing load balancing to distribute incoming requests across multiple servers to avoid overloading any one server. The FWSs that I built were mostly regional systems with relatively low request volumes (hundreds of requests per hour). To scale our design back then to, say, a national system would have posed significant technical challenges.
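The simplest load-balancing policy is round robin: hand each incoming request to the next server in a fixed rotation. The sketch below shows only that selection logic, with made-up backend addresses. A production balancer would also need health checks, timeouts, and connection handling, none of which are modeled here.

```python
import itertools
from collections import Counter


class RoundRobinBalancer:
    """Cycle through a fixed list of backends, one per request."""

    def __init__(self, backends):
        backends = list(backends)
        if not backends:
            raise ValueError("at least one backend is required")
        self._cycle = itertools.cycle(backends)

    def next_backend(self):
        return next(self._cycle)


# Hypothetical backend addresses, for illustration only.
balancer = RoundRobinBalancer(["api-1:8080", "api-2:8080", "api-3:8080"])

# Route nine requests and count how many each backend received.
assignments = Counter(balancer.next_backend() for _ in range(9))
print(assignments)
```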

Selecting a hosting platform (or hosting yourself)

You need hardware to store your data and process the computing tasks required by your API, and most likely you’ll choose a public cloud to simplify. Still, you’ll need to be well-versed in the different options offered by cloud-based hosting platforms such as Google Cloud Platform, Amazon Web Services, or Microsoft Azure.

Implementing rate limiting

Don’t let a DDoS attack bring down your API. You’ll need to implement rate limiting to prevent users from making too many requests too quickly, whether accidentally or nefariously. Failure to handle rate limiting intelligently can degrade user experience (at best), send your hosting bill through the roof, or completely bring down your API (at worst).
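A common way to implement rate limiting is a token bucket: each client has a bucket that refills at a steady rate, and every request spends one token. The following is a minimal single-process sketch under that assumption. The class and parameter names are my own, and a real deployment would typically keep one bucket per client in shared storage.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `rate` tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self._clock = clock            # injectable so the logic can be tested
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self):
        """Return True and spend a token if the request may proceed."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the time that has passed, never beyond capacity.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False


bucket = TokenBucket(rate=5, capacity=10)
results = [bucket.allow() for _ in range(12)]
print(results.count(True), "allowed of", len(results))
```

A rejected request would normally be answered with HTTP 429 (Too Many Requests) so that well-behaved clients know to back off.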

Monitoring performance and optimizing

You’ll need to continuously monitor the performance of the API and optimize as necessary to keep metrics like p95 latency at acceptable levels even at scale. You need to know how to wield tools like Grafana, New Relic, Datadog, or AWS CloudWatch to monitor performance and identify bottlenecks.
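For readers unfamiliar with the metric, p95 latency is the response time that 95% of requests come in at or under. Here is a small sketch of the nearest-rank method for computing it. The latency samples are invented, and monitoring tools may use slightly different interpolation rules.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least
    pct percent of all samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Invented response times in milliseconds: mostly fast, with two slow outliers.
latencies_ms = [12, 15, 11, 14, 13, 250, 16, 12, 13, 14,
                15, 12, 11, 13, 14, 12, 13, 15, 14, 900]

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
print(f"p50={p50} ms, p95={p95} ms")
```

The gap between the median and the p95 is the point of the metric: an average or median can look healthy while a meaningful share of users waits far longer.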

Securing the API

Security extends far beyond preventing DDoS through rate limiting. Your API will need security measures to protect against attacks and data breaches. That means you need to know best practices and implementation details for authentication, authorization, and encryption.
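To separate two of those terms: authentication asks whether a presented token is valid, and authorization asks whether that token is allowed to do what it is attempting. The sketch below illustrates both with an in-memory token store. The scope names are hypothetical, and the example does not cover encryption, token expiry, or revocation.

```python
import hashlib
import hmac
import secrets


class TokenStore:
    """Keeps only SHA-256 digests of issued tokens, mapped to their scopes."""

    def __init__(self):
        self._scopes_by_digest = {}

    @staticmethod
    def _digest(token):
        return hashlib.sha256(token.encode("utf-8")).hexdigest()

    def issue(self, scopes):
        """Create a random token that carries the given scopes."""
        token = secrets.token_urlsafe(32)
        self._scopes_by_digest[self._digest(token)] = frozenset(scopes)
        return token

    def is_authorized(self, token, required_scope):
        presented = self._digest(token)
        for stored, scopes in self._scopes_by_digest.items():
            # Authentication: constant-time comparison against known digests.
            if hmac.compare_digest(presented, stored):
                # Authorization: the token must carry the required scope.
                return required_scope in scopes
        return False


store = TokenStore()
read_only = store.issue({"readings:read"})  # hypothetical scope name

print(store.is_authorized(read_only, "readings:read"))
print(store.is_authorized(read_only, "readings:write"))
print(store.is_authorized("not-a-real-token", "readings:read"))
```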

Overall, designing and deploying an API requires a combination of functional design, backend engineering, and the use of best practices in software development and infrastructure management. When you are at the drawing board, these concerns can feel overwhelming, especially when the stakes are high as with an FWS.

The good news is that Tinybird can help manage many of these so you do not have to take it all on yourself.

How does Tinybird help you build a resilient data API?

When I first learned of Tinybird, the notion of using its features to build and support a real-time API caught my attention. I wondered how Tinybird might have impacted the approach I once took building mission-critical APIs for flood-warning systems.

I began to think of Tinybird as a platform that enables developers to easily design, deploy, and support data APIs at scale. Tinybird makes it straightforward to ingest your data, store it, analyze it, transform it, and make it available via dynamic, scalable, secure, and documented API endpoints.

Here are some specific ways that Tinybird simplifies data API development:

Tinybird is a ready-made real-time backend.

Tinybird is a hosted, highly available backend that abstracts away things like data storage, hosting, and web frameworks. The platform was designed to minimize the latency between a new data point being generated (by something like an FWS sensor) and it being available to query through an API endpoint.

Tinybird offers many ways to ingest your data.

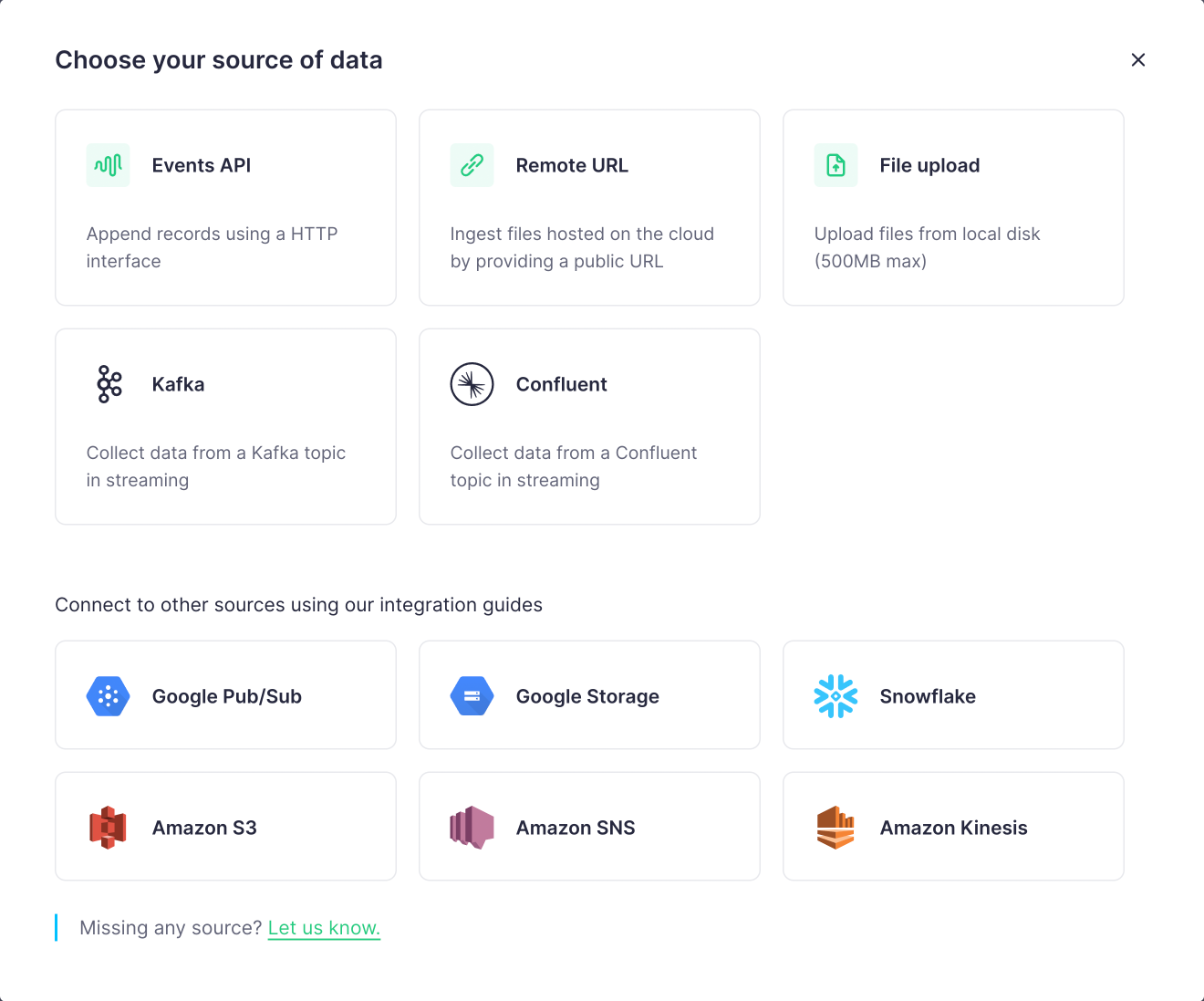

Regardless of where and how your data is generated, the first step to building a data API is capturing and storing the data that its endpoints query. Tinybird makes it quite simple to ingest data from many sources through various methods, including:

- Pushing data into Tinybird with the Events API, a high-frequency ingestion HTTP endpoint that accepts JSON payloads and writes them to storage at thousands of events per second.

- Consuming real-time data streams, such as Kafka-based streams, using native connectors.

- Scheduling table syncs from data warehouses such as BigQuery and Snowflake, again using native connectors.

- Uploading remote and local files in CSV, NDJSON, and Parquet formats.
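As an illustration of the first option, the sketch below builds an Events API request from a batch of sensor readings. It assumes the `/v0/events` endpoint with a `name` query parameter, a newline-delimited JSON body, and a bearer token. The Data Source name, the field names, and the token placeholder are hypothetical, and the API host depends on your Tinybird region, so check the documentation for your workspace.

```python
import json
import urllib.parse
import urllib.request


def build_events_request(datasource, events, token, host="https://api.tinybird.co"):
    """Build (but do not send) a POST carrying one JSON object per line."""
    body = "\n".join(json.dumps(event) for event in events).encode("utf-8")
    query = urllib.parse.urlencode({"name": datasource})
    return urllib.request.Request(
        f"{host}/v0/events?{query}",
        data=body,
        method="POST",
        headers={"Authorization": f"Bearer {token}"},
    )


# Hypothetical readings and Data Source name, for illustration only.
request = build_events_request(
    "sensor_readings",
    [
        {"station_id": "A1", "sensor_type": "rain_gauge", "value": 4.2},
        {"station_id": "A1", "sensor_type": "stage", "value": 1.87},
    ],
    token="<YOUR_TOKEN>",
)

print(request.get_method(), request.full_url)
# Sending is left out so the sketch runs offline:
# urllib.request.urlopen(request)
```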

You can learn more about data ingestion with Tinybird here.

Tinybird manages data storage.

Tinybird stores and manages data with ClickHouse, an open source database optimized for real-time data processing and analysis. As an OLAP datastore, ClickHouse is designed to handle billions of rows and process hundreds of gigabytes of data per second.

ClickHouse is a powerful database, but it can be difficult to deploy and host. Tinybird simplifies ClickHouse deployment, so your API can easily scale as needed without requiring that you manage infrastructure along the way.

Tinybird uses SQL to filter and transform data.

Tinybird queries are constructed using SQL, a familiar language for most. Furthermore, Tinybird simplifies query development with Pipes, a way to break up larger SQL queries into simpler, composable nodes.

Each node can be monitored and optimized for performance and errors, making debugging much simpler and more efficient. You can learn more about Pipes here.

Additionally, Tinybird offers an easy-to-use templating language that allows you to interleave query parameters directly in your SQL. When you publish your queries as endpoints, your API documentation will automatically include any parameters you define.
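From the caller's side, a templated parameter becomes an ordinary query-string parameter on the published endpoint. The sketch below builds such a URL, assuming the `/v0/pipes/<pipe_name>.json` endpoint form. The Pipe name `weather_reports` and the parameter value are hypothetical, and the host depends on your Tinybird region.

```python
import urllib.parse


def endpoint_url(pipe_name, params, host="https://api.tinybird.co", fmt="json"):
    """Build the URL for a published Pipe endpoint with its query parameters."""
    query = urllib.parse.urlencode(params)
    return f"{host}/v0/pipes/{urllib.parse.quote(pipe_name)}.{fmt}?{query}"


# Hypothetical Pipe name and parameter value, for illustration only.
url = endpoint_url("weather_reports", {"sensor_type": "rain_gauge"})
print(url)
```

A request to this URL would also need a token with read access to the Pipe, for example in an `Authorization: Bearer` header.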

Here's an example SQL snippet that I'm working on for the second post in this series, and you can see how the {{}} define my query parameter called sensor_type.

Tinybird handles horizontal scaling and load balancing.

Tinybird is serverless, and it scales to provide the bandwidth needed to serve the APIs you publish at high levels of concurrency and low latency. Tinybird’s shared infrastructure routinely maintains four to five nines of system uptime, and Enterprise customers enjoy dedicated clusters that can be even more resilient.

Tinybird simplifies and speeds design iterations.

With the wrong tooling, design changes during development can really slow you down. With Tinybird, you can iterate using a dashboard UI (or command-line interface) and immediately see and use the result. One of the joys of Tinybird is being able to design and iterate so easily without worrying about how iterations might slow you down.

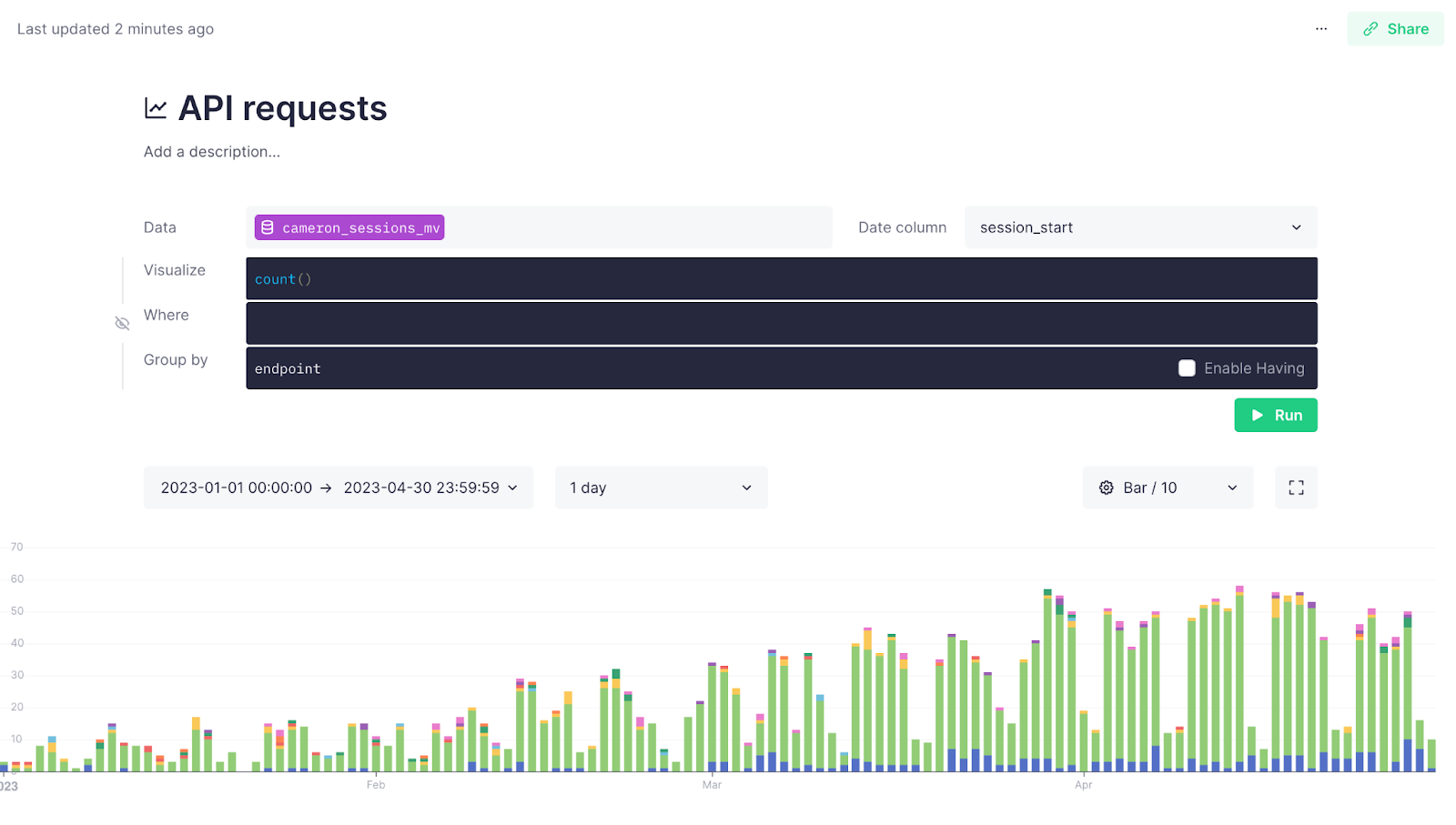

Tinybird includes system logs and monitoring tools.

Tinybird includes built-in observability tools to monitor API performance. It logs all API requests and responses, including event timestamps, response codes, durations, and the amount of data processed, into Service Data Sources that you can query as needed.

In addition to API monitoring, Tinybird Service Data Sources allow you to closely monitor data ingestion to make sure the foundation of your API stays strong.

Tinybird handles authentication and authorization, with support for row-level security.

Tinybird gives you full control over authentication and authorization through user tokens with prescribed permissions. User tokens can be created and managed for all your resources in Tinybird, including Data Sources, Pipes, and API Endpoints, and you can use SQL statements to set very specific row-level permission scopes. You can learn more about token management here.

By providing all of these features, Tinybird makes it much simpler to build resilient APIs at scale. It virtually eliminates concerns about storage, hosting, frameworks, development languages, security, and observability.

By taking all of these considerations off of your plate, Tinybird frees you to focus on API design, implementation, and optimization. You can much more quickly and efficiently build scalable, resilient APIs over tremendous volumes of data.

Summary

I hope that this post has helped you better understand the essential building blocks of data APIs, the importance of each component for ensuring resiliency and reliability in your APIs, and how Tinybird can simplify your data API development.

Creating a data API involves many steps, such as designing endpoints, selecting suitable programming languages and frameworks, optimizing data storage and performance, implementing caching and load balancing, and securing the API. If you can handle each of these steps effectively, then you can create robust data APIs to power user-facing features that range from real-time personalization to smart inventory management to flood warning systems.

Tinybird helps you by simplifying and managing many of these tasks. It’s a platform with built-in backend architecture, authentication, data ingestion and storage, SQL query builders, load balancing, monitoring, and API hosting.

With Tinybird, developers can concentrate on designing data API features and optimizing performance, while the platform handles the more complex and mundane aspects of implementing and maintaining APIs.

How do I get started?

Ready to build a resilient data API with Tinybird? Try Tinybird today, for free. Get started with the Build Plan - which is more than enough for most simple projects and has no time limit - and upgrade as you scale.

If you want to learn more about the different ways that Tinybird supports and simplifies API development, check out the documentation, specifically these resources below: